In 2026, building an AI model is no longer just about accuracy or speed; it is fundamentally about trust. As machine learning becomes embedded in critical sectors like healthcare, finance, and hiring, a single biased decision can have severe consequences for the people affected. This reality has led many developers, managers, and business leaders to ask: what purpose do fairness measures serve in AI product development?

It is a matter of ethics and common sense. A machine learning algorithm that makes biased mistakes is not only a software problem; it is also a serious threat to brand reputation and a source of costly lawsuits. By adopting Responsible AI guidelines, companies can work toward technology that is fair, explainable, and secure. These guidelines function as an ethical compass that helps shield the company from disparate impact, where a seemingly neutral system disadvantages a protected group.

Summary Box: The Core “Why”

The primary answer to what purpose fairness measures serve in AI product development is that they help identify and mitigate biases in AI algorithms. They reduce the risk of lawsuits, brand damage, and customer distrust by making outcomes more ethical and less biased.

Establishing the Foundation: How Fairness Enhances Product Quality

The core purpose fairness measures serve in AI product development is to provide a mathematical and procedural foundation for ethical, unbiased outcomes. Algorithms often inherit biases from historical datasets. Without intervention, they risk repeating past prejudices.

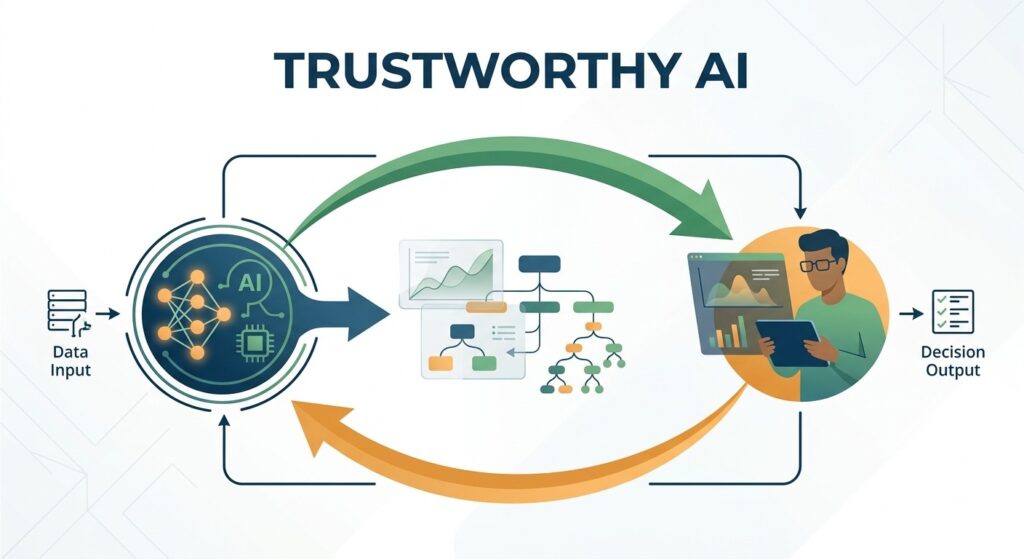

Focusing on fairness in machine learning allows the team to move away from “black box” solutions. Fairness metrics help the team detect hidden biases in the data, including biases that are layered in complicated ways. This is crucial in Ethical AI product development, which prioritizes the quality of the decisions a system makes over raw predictive performance.

If a product incorporates procedural fairness, both the decision-making process and the resulting decision are just. This strengthens the connection between the product and its users, who can trust that the system’s decisions do not unfairly affect any single person.

Summary Box: Building Quality

The inclusion of fairness metrics ensures the quality and reliability of the product. The objective behind them is to enable us to create algorithms that deliver impartial results for everyone.

The Technical Role of Algorithmic Fairness Metrics

To truly understand what purpose fairness measures serve in AI product development, we must look at the math. Algorithmic fairness metrics provide the data-driven proof needed for algorithmic accountability. These metrics help engineers quantify “fairness” so it can be optimized just like accuracy or latency.

In 2026, standard development practice involves several key fairness metrics:

- Demographic parity: Ensuring the likelihood of a positive outcome is equal across all groups (e.g., gender or race).

- Equalized odds: Checking that the model has equal false positive rates and equal false negative rates across groups.

- Equal opportunity metric: Ensuring that qualified individuals from all groups have the same chance of a positive result (equal true positive rates).

- Predictive parity: Ensuring that a positive prediction is equally likely to be correct (equal precision), regardless of group membership.
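The four metrics in this list can all be read off a per-group confusion matrix. The sketch below is one minimal way to do that with NumPy; the function names (`group_rates`, `gap`) and the toy arrays are illustrative assumptions, not part of any particular fairness library. Because an equal false negative rate is the same thing as an equal true positive rate, the equalized odds check here compares true positive and false positive rates.

```python
import numpy as np

def safe_div(num, den):
    """Return num / den, or NaN when the denominator is zero."""
    return num / den if den else float("nan")

def group_rates(y_true, y_pred, group):
    """Per-group selection rate, TPR, FPR and precision (PPV)."""
    rates = {}
    for g in np.unique(group).tolist():
        m = group == g
        yt, yp = y_true[m], y_pred[m]
        tp = int(np.sum((yt == 1) & (yp == 1)))
        fp = int(np.sum((yt == 0) & (yp == 1)))
        fn = int(np.sum((yt == 1) & (yp == 0)))
        tn = int(np.sum((yt == 0) & (yp == 0)))
        rates[g] = {
            "selection_rate": safe_div(tp + fp, len(yt)),  # demographic parity
            "tpr": safe_div(tp, tp + fn),                  # equal opportunity
            "fpr": safe_div(fp, fp + tn),                  # with tpr: equalized odds
            "ppv": safe_div(tp, tp + fp),                  # predictive parity
        }
    return rates

def gap(rates, key):
    """Largest between-group difference for one rate (0 means parity)."""
    vals = [r[key] for r in rates.values()]
    return max(vals) - min(vals)

# Toy data (hypothetical): ten applicants in two groups.
y_true = np.array([1, 1, 1, 0, 0, 1, 1, 0, 0, 0])
y_pred = np.array([1, 1, 0, 1, 0, 1, 0, 0, 0, 0])
group = np.array(["a"] * 5 + ["b"] * 5)

rates = group_rates(y_true, y_pred, group)
report = {
    "demographic_parity_gap": gap(rates, "selection_rate"),
    "equal_opportunity_gap": gap(rates, "tpr"),
    "equalized_odds_gap": max(gap(rates, "tpr"), gap(rates, "fpr")),
    "predictive_parity_gap": gap(rates, "ppv"),
}
print(report)
```

On this toy data the selection rates are 0.6 and 0.2, so the demographic parity gap is 0.4, while the equalized odds gap is 0.5 because group "b" has no false positives at all. The same model can therefore look quite different depending on which metric is reported.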

Technical Comparison: Statistical Parity vs Equal Opportunity

| Fairness Metric | Focus Area | When to Use It |
| --- | --- | --- |
| Statistical parity | Overall Outcome | When you want an equal percentage of each group to get a result. |
| Equal opportunity metric | Performance for “Qualified” Users | When you want to ensure the “true positive” rate is identical across groups. |
| Individual fairness | Similarity | When you need to ensure that similar people are treated similarly. |
| Group fairness | Broad Demographics | When you are protecting specific protected classes (Age, Race, Gender). |

Summary Box: Metrics as a Lens

Algorithmic fairness metrics provide a technical roadmap for bias detection and elimination. They turn the abstract concept of “fairness” into measurable goals that developers can track in real-time.

Advanced Bias Mitigation: Preprocessing, In-processing, and Postprocessing

Another vital purpose fairness measures serve in AI product development is their role in the actual development pipeline. AI bias mitigation is not a single step; it is a continuous cycle that spans three distinct phases.

A. Preprocessing (The Data Stage)

This is where data balancing techniques come into play. If the training data is skewed, the model will be skewed. Developers use these techniques to re-weight or re-sample data to ensure a representative baseline.
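As a rough illustration of re-weighting, the sketch below assigns each training row a weight of P(group) × P(label) / P(group, label), a scheme often called reweighing. After weighting, the label looks statistically independent of the group. The function name and the toy arrays are assumptions made for this example.

```python
import numpy as np

def reweighing_weights(group, label):
    """Weight each row by P(group) * P(label) / P(group, label)."""
    group = np.asarray(group)
    label = np.asarray(label)
    weights = np.ones(len(label), dtype=float)
    for g in np.unique(group).tolist():
        for y in np.unique(label).tolist():
            cell = (group == g) & (label == y)
            if cell.any():
                expected = (group == g).mean() * (label == y).mean()
                observed = cell.mean()
                weights[cell] = expected / observed
    return weights

# Toy data (hypothetical): group "a" is mostly labelled positive, "b" mostly negative.
group = np.array(["a"] * 6 + ["b"] * 4)
label = np.array([1, 1, 1, 1, 0, 0, 1, 0, 0, 0])

weights = reweighing_weights(group, label)

def weighted_positive_rate(g):
    m = group == g
    return np.sum(weights[m] * label[m]) / np.sum(weights[m])

print(weighted_positive_rate("a"), weighted_positive_rate("b"))
```

The raw positive rates here are 4/6 for "a" and 1/4 for "b"; after weighting, both groups have a weighted positive rate of 0.5. Weights like these can usually be passed to a training routine that accepts per-sample weights.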

B. In-processing (The Training Stage)

During the learning phase, experts use adversarial debiasing. This involves a second model (the adversary) that tries to predict a protected attribute from the main model’s output. If the adversary succeeds, the main model is penalized, pushing it toward outputs that reveal less about the protected attribute.
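To make the predictor-versus-adversary idea concrete, here is a deliberately simplified NumPy sketch: a logistic regression predictor and a one-parameter logistic adversary, trained with hand-written gradients. Everything in it (the function names, the synthetic data, the penalty weight `lam`) is an assumption for illustration. Production implementations typically use a deep learning framework and extra stabilization, and this kind of training can oscillate, so treat the result as something to measure rather than a guarantee.

```python
import numpy as np

def sigmoid(z):
    # Clip the input so np.exp cannot overflow.
    return 1.0 / (1.0 + np.exp(-np.clip(z, -30.0, 30.0)))

def train_adversarial(X, y, s, lam=1.0, lr=0.1, steps=300):
    """Logistic predictor trained against a logistic adversary.

    X: features, y: binary labels, s: binary protected attribute.
    lam: penalty strength (lam=0 reduces to plain logistic regression).
    """
    n, d = X.shape
    w, b = np.zeros(d), 0.0   # predictor parameters
    u, c = 0.0, 0.0           # adversary parameters
    for _ in range(steps):
        z = X @ w + b              # predictor logit
        p = sigmoid(z)             # predicted P(y = 1)
        a = sigmoid(u * z + c)     # adversary's P(s = 1) given the logit

        # Gradients of the adversary's cross-entropy loss.
        grad_u = np.mean((a - s) * z)
        grad_c = np.mean(a - s)

        # Predictor: descend the task loss, ascend the adversary's loss.
        dz = (p - y) - lam * (a - s) * u
        w -= lr * (X.T @ dz) / n
        b -= lr * np.mean(dz)

        # Adversary: descend its own loss.
        u -= lr * grad_u
        c -= lr * grad_c
    return w, b

# Synthetic data (hypothetical): "proxy" leaks the protected attribute s.
rng = np.random.default_rng(0)
n = 400
s = rng.integers(0, 2, size=n).astype(float)
proxy = s + rng.normal(scale=0.5, size=n)
skill = rng.normal(size=n)
X = np.column_stack([proxy, skill])
y = (skill + 0.8 * proxy + rng.normal(scale=0.5, size=n) > 0.4).astype(float)

def selection_gap(w, b):
    """Absolute difference in selection rate between the two groups."""
    pred = sigmoid(X @ w + b) > 0.5
    return abs(pred[s == 1].mean() - pred[s == 0].mean())

w_plain, b_plain = train_adversarial(X, y, s, lam=0.0)
w_fair, b_fair = train_adversarial(X, y, s, lam=1.0)
print("selection-rate gap, lam=0:", selection_gap(w_plain, b_plain))
print("selection-rate gap, lam=1:", selection_gap(w_fair, b_fair))
```

Comparing the two printed gaps, alongside task accuracy, is the point of the exercise: `lam` is a dial between the two objectives, and a sensible value has to be found empirically.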

C. Postprocessing (The Result Stage)

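After the model generates a score, developers can adjust the decision thresholds, often per group, to satisfy group-level goals such as Equal opportunity or Treatment equality. Counterfactual fairness is a causal criterion and usually needs more than a threshold change. Threshold adjustment helps keep the final output fair even if the raw model scores had slight imbalances.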
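One simple way to adjust thresholds is to pick a separate cut-off per group so that each group reaches the same true positive rate. The sketch below assumes this equal opportunity target; the helper names, the target of 0.75, and the toy scores are all illustrative. Note that using group membership at decision time is itself a policy and legal question that the code does not settle.

```python
import numpy as np

def threshold_for_tpr(scores, y_true, target_tpr):
    """Highest threshold whose true positive rate is at least target_tpr."""
    pos_scores = np.sort(scores[y_true == 1])[::-1]   # positives, high to low
    k = int(np.ceil(target_tpr * len(pos_scores)))    # positives to accept
    k = min(max(k, 1), len(pos_scores))
    return float(pos_scores[k - 1])

def fit_group_thresholds(scores, y_true, group, target_tpr):
    """One threshold per group, each tuned to the same target TPR."""
    return {
        g: threshold_for_tpr(scores[group == g], y_true[group == g], target_tpr)
        for g in np.unique(group).tolist()
    }

def predict(scores, group, thresholds):
    cut = np.array([thresholds[g] for g in group.tolist()])
    return (scores >= cut).astype(int)

def tpr(y_true, y_pred, mask):
    return float(y_pred[mask & (y_true == 1)].mean())

# Toy scores (hypothetical): group "b" receives systematically lower scores.
scores = np.array([0.9, 0.8, 0.7, 0.6, 0.5, 0.3,
                   0.7, 0.5, 0.4, 0.2, 0.3, 0.1])
y_true = np.array([1, 1, 1, 1, 0, 0,
                   1, 1, 1, 1, 0, 0])
group = np.array(["a"] * 6 + ["b"] * 6)

single = (scores >= 0.5).astype(int)                  # one global threshold
thresholds = fit_group_thresholds(scores, y_true, group, target_tpr=0.75)
adjusted = predict(scores, group, thresholds)

for name, pred in [("single threshold", single), ("group thresholds", adjusted)]:
    print(name, tpr(y_true, pred, group == "a"), tpr(y_true, pred, group == "b"))
```

With the single threshold of 0.5, qualified members of group "a" are all accepted while only half of group "b" are; with the fitted thresholds (0.7 and 0.4), both groups reach a true positive rate of 0.75.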

Expert Insight: Authority in AI Growth

Navigating these technical complexities is what separates industry leaders from the rest. To see how these ROI-driven strategies are applied to real-world digital growth, visit https://dnexa.in/

Regulatory Guide: The EU AI Act and Compliance

In 2026, the purpose fairness measures serve in AI product development is increasingly defined by law. AI regulatory compliance is no longer a suggestion; it is a requirement. Specifically, for systems classified as high-risk, the EU AI Act requirements make Auditing AI for discrimination an expected part of the product lifecycle.

To meet these standards, companies must adopt a comprehensive AI risk management framework. These frameworks require:

- Detailed documentation of Algorithmic fairness metrics.

- Clear evidence of Bias detection and elimination efforts.

- Implementation of Explainable AI (XAI) so that decisions can be understood and audited by humans.

By following these rules, businesses avoid the “brand damage” that comes from regulatory fines and public failure.

Summary Box: Legal and Ethical Safety

Fairness measures serve as the primary tool for meeting EU AI Act requirements. They ensure your product stays on the market by providing a clear path for Auditing AI for discrimination.

The Human Factor: Explainable AI (XAI) and HITL

We must also ask: what purpose do fairness measures serve in AI product development when it comes to human oversight? Even the best metrics can’t catch every nuance. This is where Human-in-the-loop (HITL) becomes critical.

Explainable AI (XAI) allows human auditors to see the “logic” behind a machine’s decision. If a model denies a loan, XAI explains why. If that “why” is based on a biased proxy variable, the Human-in-the-loop (HITL) can intervene. This combination of human empathy and machine precision is the gold standard for Ethical AI product development.

- Treatment equality: Ensuring the standard of care/service is the same for everyone.

- Procedural fairness: Making sure the “rules” the AI follows are transparent and justified.
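To support a human reviewer looking for proxy variables, a team might start with a simple screen like the one below, which flags features whose correlation with a protected attribute exceeds a chosen cut-off. The feature names, the 0.5 cut-off, and the data are hypothetical, and a linear correlation check will miss nonlinear or combined proxies, so it is a starting point for review rather than a verdict.

```python
import numpy as np

def flag_proxy_features(features, protected, cutoff=0.5):
    """Return feature names whose |Pearson correlation| with the
    protected attribute is above the cut-off, with the correlation."""
    flagged = {}
    for name, values in features.items():
        r = float(np.corrcoef(values, protected)[0, 1])
        if abs(r) > cutoff:
            flagged[name] = r
    return flagged

# Toy data (hypothetical feature names and values).
protected = np.array([0, 0, 0, 1, 1, 1])
features = {
    "zip_code_score": np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0]),
    "years_experience": np.array([2.0, 5.0, 3.0, 3.0, 5.0, 2.0]),
}

flagged = flag_proxy_features(features, protected)
print(flagged)  # features a human reviewer should look at first
```

Here only `zip_code_score` is flagged, which is the kind of signal a Human-in-the-loop reviewer would then investigate before deciding whether the feature should stay in the model.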

Summary Box: Human Oversight

Metrics are powerful, but they require a Human-in-the-loop (HITL) to interpret. Explainable AI (XAI) provides the transparency needed for true algorithmic accountability.

Common Mistakes in Implementing Fairness Measures

Even when they understand what purpose fairness measures serve in AI product development, many teams still make errors.

- Metric Obsession: Relying on one metric (like Statistical parity) while ignoring others (like Equalized odds).

- The “One-and-Done” Fallacy: Treating fairness as a checklist item rather than a continuous part of the AI risk management framework.

- Ignoring Context: Forgetting that “fairness” in a medical AI is different from “fairness” in a social media recommendation engine.

Conclusion: Building the Ethical Future of AI

So, what purpose do fairness measures serve in AI product development in 2026? They are the essential bridge between a technical experiment and a sustainable, world-class product. By focusing on AI bias mitigation, staying compliant with EU AI Act requirements, and maintaining machine learning fairness, businesses build a foundation of long-term trust.

The journey toward Responsible AI frameworks is ongoing. It requires a commitment to Bias detection and elimination and an understanding that ethical outcomes drive better ROI than biased shortcuts.

Ready to Elevate Your Digital Strategy?

Developing high-conversion, ethical, and ROI-based digital products is what we excel at. Looking for someone who can guide you through the digital world of tomorrow? Visit Dnexa today.

Final Action Point: Review your current AI risk management framework and ensure that Auditing AI for discrimination is a standard part of your next release cycle.

Frequently Asked Questions

1. What is fairness in AI and why is it important?

This is the most common “entry-level” search. It addresses the basic definition and the societal impact of algorithmic decisions.

2. How do you detect bias in a machine learning model?

Users search for this when looking for practical steps, tools, or techniques to audit their existing AI systems.

3. What are the most common fairness metrics in AI?

People often look for specific names like Equalized Odds, Disparate Impact, and Demographic Parity to understand how to measure success.

4. Can AI ever be 100% unbiased?

A popular philosophical and technical query. It usually leads to discussions about how “unbiased” data is nearly impossible to find, and how “fairness” itself is subjective.

5. What is the difference between AI bias and human bias?

Users search for this to understand how human prejudices get encoded into “objective” code and how AI can actually scale those biases faster than humans.

6. How does data sampling affect AI fairness?

A technical search focused on the root cause: if the training data is missing certain groups, the AI cannot be fair toward them.

7. What are the legal requirements for AI fairness in 2026?

Developers and founders search this to stay compliant with new laws regarding automated decision-making and data privacy.

8. What are some real-world examples of AI bias?

Users often look for case studies (like facial recognition errors or biased loan approvals) to understand the “risk” of not using fairness measures.

9. Which tools can help audit AI models for fairness?

A tool-based search looking for libraries like IBM AI Fairness 360, Google’s What-If Tool, or Microsoft’s Fairlearn.

10. How do you balance AI accuracy vs AI fairness?

A high-level technical query. Often, making a model “fairer” might slightly drop its overall accuracy, and developers need to know how to navigate that trade-off.